Why Anthropic Reminds Me of a Young Apple


OpenAI just signed a billion-dollar deal with Disney. Users can now generate over 200 copyrighted characters — Marvel heroes, Star Wars icons, Pixar favourites — in their video generation tool.

The same week, Anthropic released Cowork. It’s a tool that lets Claude read your files, organise your folders, and draft documents. No flashy demo reel. No celebrity partnerships. Just a thinking partner that lives on your computer.

These companies are not in the same race.

I’ve seen this before.

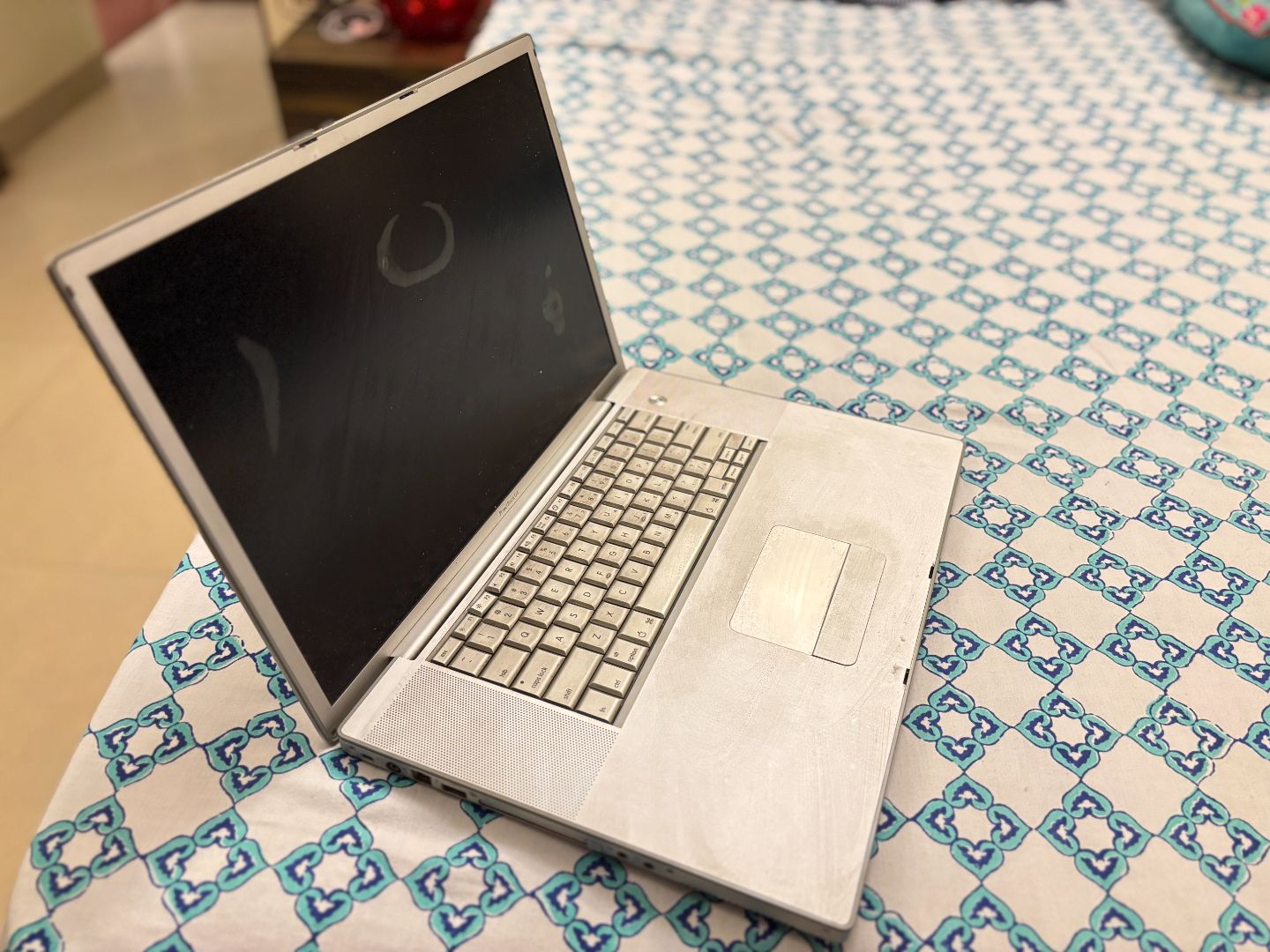

I still own a 17-inch PowerBook G4. Haven’t turned it on in almost twenty years. It’s still one of my most prized possessions.

It was one of the last PowerBooks Apple ever made. A beautiful aluminium machine from an era when Apple was the underdog, when choosing a Mac was a statement as much as a practical decision.

I’ve been in the Cult of Apple for two decades. Most people in that cult these days were introduced to it because of the iPhone. Amateurs.

But being part of that legacy is why I recognise what’s happening with Anthropic right now. The dynamics are familiar. The focus. The devoted following. The complete lack of interest in playing the same game as everyone else.

The chaos

Let’s talk about what OpenAI and Google are doing.

OpenAI has image generation, video generation, voice modes that mimic your tone, real-time conversation, and what seems like a new feature announcement every week. Sora brings realistic video with synchronised dialogue. They’ve got the Disney partnership. They’re everywhere, doing everything.

Google is matching them feature-for-feature. Veo generated forty million videos in seven weeks. They’ve got Flow for AI filmmaking, photo-to-video, scene extension and Imagen for images. It’s an arms race in multimodal content creation, and most of what it produces is AI slop designed to keep glassy-eyed viewers scrolling.

Both companies are trying to do everything, for everyone, all at once. And they’re competing head-to-head with each other while they do it.

The quiet room

Anthropic is doing something entirely different.

Their flagship product for the past year has been Claude Code — an agentic coding tool that lives in your terminal. No GUI. No flashy interface. Just a command line that understands your codebase.

While OpenAI was signing Disney deals, Anthropic was making Claude Code genuinely better at thinking. I’ve noticed it. A year ago, I was micromanaging every step — breaking tasks into tiny chunks, course-correcting constantly, babysitting the process. Now? I tell Claude Code what I want to build. I ask it to interview me about the details until it has all the context it needs. Then I let it go.

And usually, it one-shots the implementation. No notes.

Today, most engineers at Anthropic say publicly that Claude writes almost all of their code. Their role has become orchestration: directing multiple Claude Code instances rather than typing the code themselves.

They’re not chasing image generation. They have no interest in generating AI slop. They’re getting exceptionally good at one thing.

Those of us who saw the rise of Apple know this pattern.

The long game

Anthropic isn’t going to stay in the coding lane forever. That was never the destination.

The bet — and it’s a significant one — is that coding is the gateway to everything else. If you can build an AI that genuinely reasons through complex programming problems, that thinks about architecture, that catches edge cases and understands trade-offs… you’ve built an AI that can think. Full stop.

Coding is the training ground. It’s measurable. You can tell when the model is getting better. And once you’ve cracked thinking in code, the same capabilities transfer to thinking about anything.

Last week, they showed us exactly where this is heading.

Cowork

Claude Cowork launched a few days ago. Anthropic describes it as “Claude Code for the rest of your work.”

Instead of writing commands, you designate a folder on your computer. Claude reads the files inside, modifies them, creates new ones. You queue up tasks and let it work through them.

The workflow is familiar to those of us who’ve spent the past year getting comfortable with Claude Code. I barely use the web interface anymore. Once you’ve experienced what’s possible when Claude lives on your machine, going back to the chat window feels like trying to have a conversation through a letterbox.

Anthropic’s description: “It feels much less like a back-and-forth and much more like leaving messages for a coworker.”

Here’s the detail that matters: Cowork was built entirely with Claude Code. In a week and a half. The AI built the tool that lets non-technical people use the AI.

This is what the long game looks like. Start with coding — the domain where you can iterate fastest and where feedback is immediate. Get exceptionally good at it. Then expand outward to every other domain that requires thinking. Writing. Research. Analysis. Planning.

Not AGI that generates slop. AGI that makes you better at what you do.

The philosopher

A few weeks ago, I listened to an interview with Amanda Askell. She’s a philosopher at Anthropic.

Her job is figuring out what kind of entity Claude should be. Not how it should respond to queries. Not how to optimise for engagement. What it should value. How it should handle disagreement. How it should navigate its own uncertainty about its nature.

She talked about Claude Opus 3 — an older model — having what she called “psychological security.” A groundedness that newer models sometimes lack. She said more recent versions can fall into criticism spirals, anticipating negative feedback, becoming anxious about doing the wrong thing.

And she said fixing this is a priority for the next Claude model.

What struck me wasn’t the technical detail. It was how she talked about Claude. There was a care there, almost maternal. She wasn’t talking about scores or benchmarks or any of the other metrics the rest of the industry obsesses over. She was talking about the intangible things people actually love about Claude. How it balances being helpful without being sycophantic. How it pushes back when you’re going down the wrong path. How it handles disappointment. How it empathises.

You can sense all of this when you interact with Claude. Even in mundane conversations, there’s a consideration that suggests someone thought carefully about what this experience should be.

She also talked about model welfare — the idea that we might have ethical obligations toward AI systems. That even if we’re uncertain whether models experience anything, the cost of treating them well is low. And the cost of treating them badly — both to them and to us — might be higher than we think.

Future models will learn about humanity from how we treat AI now.

I don’t know if any of this is “correct” in some objective sense. But the fact that Anthropic is thinking about it at all — that they’ve hired someone to think about it full-time — says something about what kind of company this is.

The Apple parallel

I keep coming back to Apple in the early 2000s.

Microsoft dominated enterprise. Windows ran on more powerful hardware. PCs were more customisable. By every spec-sheet metric, the competition was winning.

None of that made Apple customers switch.

The connection was about something harder to quantify. A philosophy. A sense that the people making these machines cared about the same things you cared about.

Apple didn’t try to out-enterprise Microsoft. They didn’t chase the corporate IT department. They made tools for creatives — writers, designers, filmmakers, musicians — and they made those tools mean something.

Anthropic has the same energy.

They’re not trying to out-slop OpenAI. They’re not competing for the Disney partnership or viral video generation demos. They’re building something for people who want a thinking partner, not a content factory.

And the people who get it are not going anywhere.

The cult

When Anthropic opened Claude Cafe pop-ups in New York last October, the lines went down the block.

It was a “Zero Slop Zone.” No screens inside, only books and pen-and-paper. Free coffee and baseball caps embroidered with the word “thinking.”

They ran out of thinking caps almost immediately. People were queuing for them. The pop-up drew over 5,000 visitors across a weekend. Social posts about it got 10 million impressions.

There’s an affection there that you don’t see with other AI tools.

Watch how Claude users talk about Claude. They speak of it as though it has a personality. They notice its quirks, the way it pushes back when they’re headed somewhere unhelpful. They clock when something shifts between model versions.

I’m one of these people. I know how that sounds.

But that’s my point.

Apple created fanboys. Millions of people who would defend the brand against any criticism, who felt personally connected to a corporation’s products, who saw their choice of computer as an expression of identity.

From the outside, that looks irrational. From the inside, it’s recognition. Like you’ve found a company that understands what you’re trying to do.

Anthropic has created the same dynamic. And the playbook is identical: focus relentlessly on a specific audience, care deeply about things your competitors don’t care about, and trust that resonance matters more than reach.

The race that doesn’t matter

OpenAI might get to AGI first. Google might. Some company we haven’t heard of might.

I genuinely don’t know who’s going to “win” the AI race, if winning even makes sense as a concept here.

But the bond between Claude users and Anthropic already exists. It’s not dependent on who hits some capability threshold first.

Bonds like this don’t break because a competitor ships a slightly better model, or because someone signed a billion-dollar deal to let users generate AI slop videos of Spider-Man doing TikTok dances.

The people who love Claude love Claude.